In 2019, a team at OpenAI finally achieved a goal they had been pursuing for two years: teaching a robot arm to solve the Rubik’s Cube. The feat has several extraordinary implications and offers some practical lessons.

While robots can be programmed to manipulate objects in a routine way, as on an assembly line, they have until recently struggled with seemingly simple tasks, such as sorting differently shaped boxes and placing them on a pallet, or picking a pencil out of a pile of random objects. Teaching robots to master such tasks is critical to the eventual development of general-purpose robots. The level of dexterity exhibited by OpenAI’s robot arm was an important step in this direction.

Interestingly, OpenAI taught their robot via simulation. While it has become common practice to train neural networks in simulations (including video games), there is an inherent problem in this approach: you cannot create a perfect model of the real world in which the robot eventually has to operate. To address this issue, OpenAI relied on automatic domain randomization (ADR). That is, they employed an algorithm that automatically introduced random changes into the training simulation. One of the factors they randomized was the size of the Rubik’s Cube. By exposing the arm to differently sized cubes, they were able to teach it something about the physics of the actual cube.
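To make the idea of randomizing a simulation parameter more concrete, here is a minimal Python sketch. It is not OpenAI’s implementation: the names `RandomizedParam` and `adr_round`, the 5.7 cm starting cube size, the step size, the success threshold, and the toy `evaluate` function are all assumptions made for illustration. The sketch samples a cube size from a range for each simulated episode and widens that range only when the policy succeeds often enough, so the training environments become more varied as the policy improves.

```python
import random
from dataclasses import dataclass


@dataclass
class RandomizedParam:
    """A simulation parameter sampled from a range that can widen over time."""
    low: float
    high: float
    step: float  # how far each bound moves when the range is widened

    def sample(self, rng: random.Random) -> float:
        # Draw one value uniformly from the current range.
        return rng.uniform(self.low, self.high)

    def widen(self) -> None:
        # Make the environment more varied by expanding both bounds.
        self.low -= self.step
        self.high += self.step


def adr_round(param, evaluate, rng, episodes=20, threshold=0.8):
    """Run `episodes` randomized episodes and widen the range on success.

    `evaluate` is a hypothetical callable that takes a sampled cube size
    and returns True if the policy solved that episode.
    Returns the measured success rate.
    """
    successes = sum(bool(evaluate(param.sample(rng))) for _ in range(episodes))
    rate = successes / episodes
    if rate >= threshold:
        param.widen()
    return rate


if __name__ == "__main__":
    rng = random.Random(0)
    # Assumed starting point: no randomization around a 5.7 cm cube.
    cube_size = RandomizedParam(low=5.7, high=5.7, step=0.1)

    # Toy stand-in for a trained policy: it copes with cubes within
    # 0.5 cm of the nominal size. A real setup would run the simulator.
    def toy_policy_succeeds(size: float) -> bool:
        return abs(size - 5.7) < 0.5

    for round_index in range(10):
        rate = adr_round(cube_size, toy_policy_succeeds, rng)
        print(round_index, round(cube_size.low, 2), round(cube_size.high, 2), rate)
```

In a real training setup, `evaluate` would run the current policy in the simulator, and the same widening logic would be applied to many parameters at once (the source names cube size as one of them). The sketch also does not keep the lower bound positive, which a practical version would need to do.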

This approach also prepared the robot arm to deal with distractions such as wearing a glove or being nudged by a foreign object as it worked on the cube. Researchers postulate that the ADR training “leads to emergent metalearning, where the network implements a learning algorithm that allows itself to rapidly adapt its behavior to the environment it is deployed in.”

Vision Is Key for Robot Autonomy

Another key to robot autonomy is the ability of robots to visually navigate and interact with their surroundings. A good example of this is AEye’s Intelligent Detection and Ranging technology, which enables vehicles to see, classify, and respond to objects in real time.

Improvements in vision have led to a wide range of robot-based applications, such as security (thanks to improved facial recognition capabilities), public safety (through the detection of potentially harmful objects in public spaces), and manufacturing (in quality control systems).

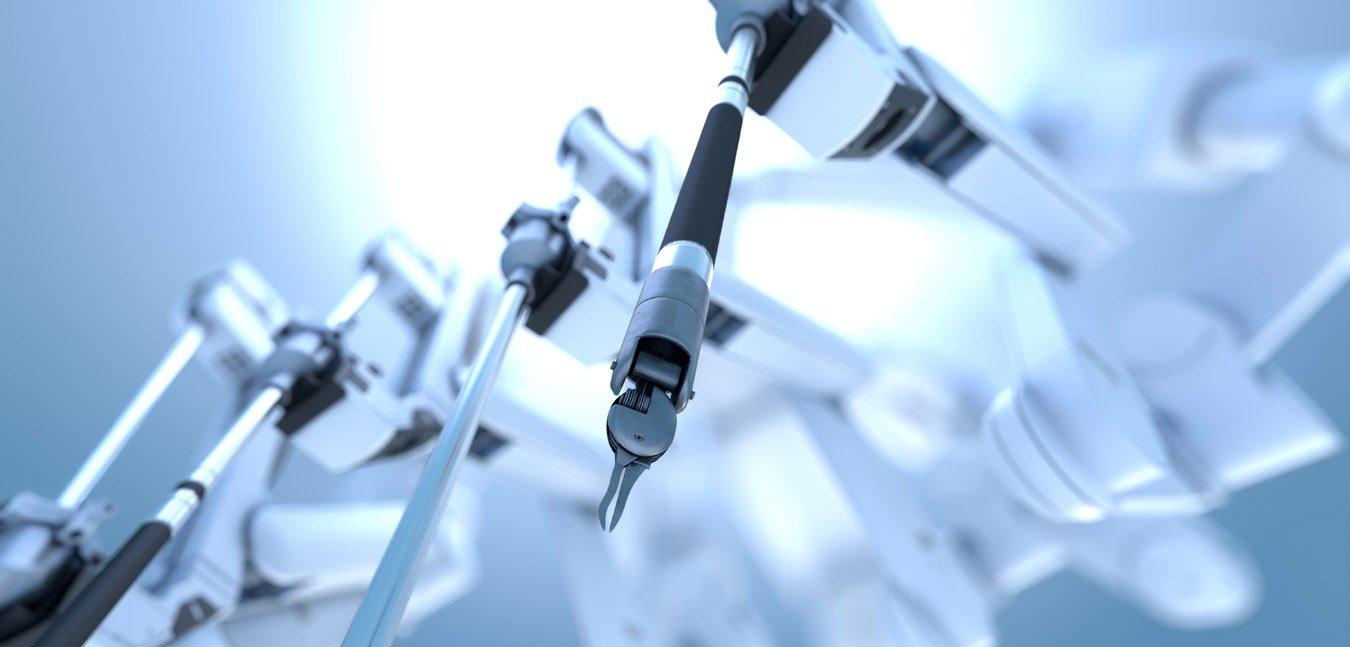

Computer vision and robotics are also having a revolutionary impact on healthcare. In one instance, scientists were able to train a neural network to read CT scan images and identify neurological disorders faster than humans can. In another, they trained an AI to diagnose certain cancers more quickly.

Beyond these powerful diagnostic use cases, we see robotics playing an even more direct role in patient care. These applications range from minimally invasive robotic surgery to remote presence robots that allow doctors to interact with patients without geographical constraint, to interactive robots that can help relieve patient stress.

This impact will be felt in terms of the level of autonomy consumers expect in their products as well as the level of autonomy we feel comfortable as a society building into our products. For example…

As it evolves, autonomous technology will influence not only the types of products we produce, which we will increasingly need to design either to interact with or to enhance autonomous systems, but also our architecture, our workplaces, and the urban environment itself.